Generative AI Tech Stack: Tools, Models & Frameworks Explained

Generative AI has quickly moved from experimental labs into real business environments. What started as curiosity-driven tools is now powering enterprise workflows, customer experiences, and even core product offerings. But behind every AI chatbot, content generator, or intelligent assistant lies something far more important than the interface itself: the generative AI tech stack.

Most conversations around AI still revolve around models. People talk about GPT, LLaMA, or Claude as if choosing a model is the entire solution. In reality, that’s just one layer. The real strength of any AI system comes from how well the entire stack is designed, integrated, and scaled.

If you’re building or planning to build AI-driven products in 2026, understanding the full stack isn’t optional anymore. It’s the difference between a demo and a deployable system.

Let’s break this down in a way that actually reflects how modern AI systems are built.

What Is a Generative AI Tech Stack?

At a practical level, the generative AI tech stack is a structured combination of technologies that work together to create, process, and deliver AI-generated outputs.

Instead of thinking about it as one tool, think of it as a pipeline:

- Data flows in

- Models process it

- Frameworks structure it

- Applications deliver it

- Infrastructure supports it

This layered system is what enables AI to move beyond isolated experiments into scalable business solutions.
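The pipeline above can be sketched as a chain of plain functions. This is only an illustration under stated assumptions: every function name here is hypothetical, and the "model" is a stub that returns a placeholder string rather than calling a real model.

```python
# Each stage is a plain function. All names are illustrative assumptions,
# not part of any real library.

def load_data(raw: str) -> str:
    """Data layer: normalize whitespace in the incoming text."""
    return " ".join(raw.split())

def apply_framework(text: str) -> str:
    """Framework layer: wrap the input in a prompt template."""
    return f"Answer concisely. Question: {text}"

def run_model(prompt: str) -> str:
    """Model layer: a stub standing in for a real model call."""
    return f"[generated answer for: {prompt}]"

def deliver(output: str) -> dict:
    """Application layer: shape the output for a UI or API response."""
    return {"status": "ok", "answer": output}

def run_pipeline(raw: str) -> dict:
    """Chain the stages; infrastructure is whatever hosts this function."""
    cleaned = load_data(raw)
    prompt = apply_framework(cleaned)
    return deliver(run_model(prompt))
```

Because each stage has a narrow input and output, any one of them can be replaced (a different model, a different template) without touching the others.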

For businesses, this stack directly influences:

- How accurate and relevant outputs are

- How fast systems respond

- How expensive it is to run AI workloads

- How easily the system can scale

That’s why companies investing in enterprise generative AI solutions are now focusing more on architecture than just models.

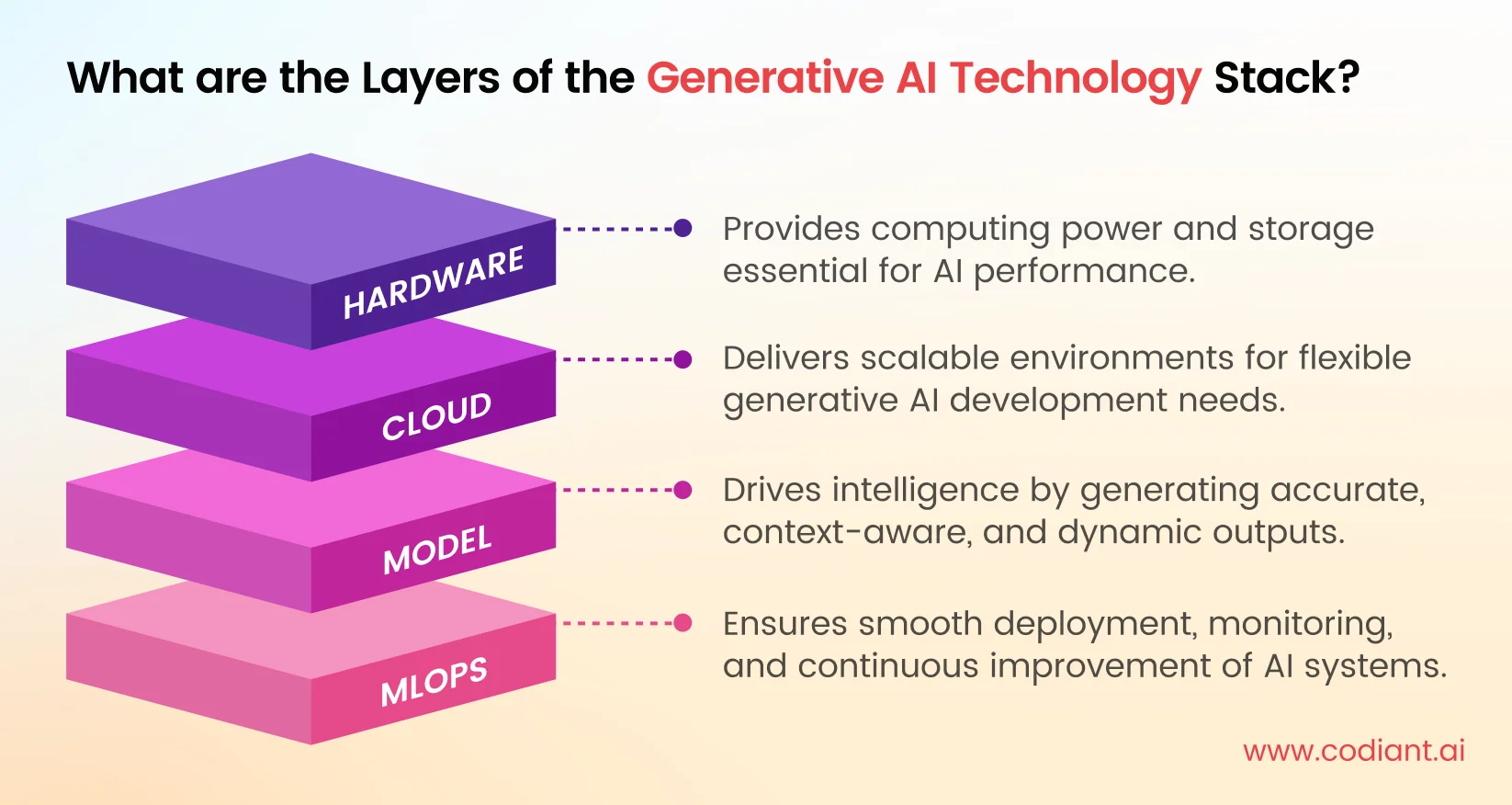

What are the Layers of the Generative AI Technology Stack?

- Hardware: Hardware forms the base of generative AI systems, providing processing power through GPUs and servers that handle large computations, training models, and running AI applications efficiently.

- Cloud: Cloud platforms provide scalable environments where AI systems are built, deployed, and managed, allowing businesses to access computing resources without investing heavily in physical infrastructure.

- Model: Models are the core intelligence of generative AI, trained on large datasets to understand patterns and generate outputs like text, images, or code based on given inputs.

- MLOps: MLOps manages the lifecycle of AI models, ensuring smooth deployment, monitoring, updates, and performance tracking, so systems remain reliable, accurate, and continuously improve over time.

The Architecture Behind Generative AI Systems

To understand how everything connects, you need to look at the generative AI architecture itself.

Modern AI systems are not monolithic. They are modular by design, which allows flexibility and scalability.

1. Data Layer: The Starting Point

Everything begins with data. Without structured and meaningful data, even the most advanced models fail to perform.

This layer includes:

- Raw data collection (documents, text, images, logs)

- Data cleaning and preprocessing

- Annotation and labeling

In enterprise environments, this often includes internal knowledge bases, CRM data, and operational datasets. The quality of this layer directly impacts output quality.
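As a rough sketch of the cleaning and preprocessing step, the snippet below strips HTML-like tags, collapses whitespace, and splits text into overlapping word chunks. The function names, chunk size, and overlap are assumptions chosen for illustration; real pipelines often chunk by tokens or document structure instead.

```python
import re

def clean_text(text: str) -> str:
    """Strip HTML-like tags and collapse runs of whitespace."""
    no_tags = re.sub(r"<[^>]+>", " ", text)
    return re.sub(r"\s+", " ", no_tags).strip()

def chunk_words(text: str, size: int = 50, overlap: int = 10) -> list[str]:
    """Split text into chunks of `size` words, each sharing
    `overlap` words with the previous chunk."""
    if overlap >= size:
        raise ValueError("overlap must be smaller than size")
    words = text.split()
    step = size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + size]))
        if start + size >= len(words):
            break  # the last chunk already reaches the end of the text
    return chunks
```

The overlap keeps a sentence that straddles a chunk boundary visible in both neighbors, which tends to help retrieval later in the stack.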

2. Model Layer: The Intelligence Core

This is where transformation happens. Models take input and generate meaningful output.

Examples include:

- Large Language Models (LLMs) for text

- Diffusion models for images

- Speech models for audio

This is where comparisons like OpenAI vs Hugging Face vs LLaMA become important.

Each model ecosystem represents a different philosophy:

- OpenAI focuses on simplicity and performance through APIs

- Hugging Face provides flexibility and open experimentation

- LLaMA offers control and cost-efficiency through self-hosting

Choosing the right model isn’t about popularity. It’s about alignment with your business needs.
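One way to keep the model choice reversible is to code against a small interface rather than a specific provider. The sketch below is an assumption about how that might look: `TextModel`, `HostedApiModel`, and `SelfHostedModel` are hypothetical names, and both implementations are stubs that make no network or GPU calls.

```python
from typing import Protocol

class TextModel(Protocol):
    """Anything with a generate() method counts as a model here."""
    def generate(self, prompt: str) -> str: ...

class HostedApiModel:
    """Stand-in for a hosted, API-based model. No network call is made."""
    def generate(self, prompt: str) -> str:
        return f"hosted:{prompt}"

class SelfHostedModel:
    """Stand-in for an open-weight model running on your own hardware."""
    def generate(self, prompt: str) -> str:
        return f"local:{prompt}"

def answer(model: TextModel, question: str) -> str:
    # Application code depends only on the interface,
    # so the backend can be swapped without other changes.
    return model.generate(question)
```

With this shape, moving from an API-first setup to a self-hosted one becomes a change in which class is constructed, not a rewrite of the application.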

3. Framework Layer: The Real Enabler

This is one of the most underestimated parts of the stack.

Frameworks are what turn raw models into usable systems. Without them, AI developers would have to manually handle prompts, context, memory, and workflows.

Popular AI model frameworks include:

- LangChain

- LlamaIndex

- Haystack

- Semantic Kernel

These frameworks help with:

- Managing multi-step reasoning

- Connecting models with external data

- Structuring prompt flows

- Handling memory and context
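To make "prompt flows" and "memory" concrete, here is a minimal hand-rolled sketch of what such frameworks take off a developer's plate. It is not the API of LangChain or any other library; the `Conversation` class and its methods are hypothetical.

```python
from collections import deque

class Conversation:
    """Keeps the last `max_turns` exchanges and renders them into a prompt."""

    def __init__(self, system: str, max_turns: int = 3):
        self.system = system
        # A bounded deque drops the oldest turn automatically.
        self.turns = deque(maxlen=max_turns)

    def add_turn(self, user: str, assistant: str) -> None:
        self.turns.append((user, assistant))

    def build_prompt(self, user_message: str) -> str:
        lines = [f"System: {self.system}"]
        for user, assistant in self.turns:
            lines.append(f"User: {user}")
            lines.append(f"Assistant: {assistant}")
        lines.append(f"User: {user_message}")
        lines.append("Assistant:")
        return "\n".join(lines)
```

Real frameworks add much more (tool calls, retries, retrieval), but the core job is the same: decide what context goes into each model call.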

When teams compare LLM frameworks, they’re not just comparing features; they’re deciding how complex their development process will be.

4. Application Layer: Where Users Interact

This is the visible part of the system.

It includes:

- Chat interfaces

- AI copilots

- Content generation platforms

- Automation dashboards

At this layer, the focus shifts from technology to user experience. The best AI systems are not the ones with the most powerful models, but the ones that deliver intuitive, fast, and reliable interactions.

5. Infrastructure Layer: The Backbone

Everything runs on infrastructure.

This includes:

- Cloud providers (AWS, Azure, GCP)

- GPUs and compute clusters

- APIs and deployment systems

- Containerization tools like Docker

A poorly designed generative AI infrastructure leads to high costs, slow performance, and scaling issues. That’s why enterprises invest heavily in optimizing this layer.
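A back-of-the-envelope cost estimate helps show why this layer matters. The sketch below assumes token-based pricing; the function name and every price passed to it are made-up placeholders, not any provider's actual rates.

```python
def monthly_cost(requests_per_day: int, input_tokens: int, output_tokens: int,
                 price_in_per_1k: float, price_out_per_1k: float,
                 days: int = 30) -> float:
    """Estimate monthly spend for token-priced inference.

    Prices are per 1,000 tokens and must come from your provider's
    current price list; nothing here is a real rate.
    """
    per_request = ((input_tokens / 1000) * price_in_per_1k
                   + (output_tokens / 1000) * price_out_per_1k)
    return round(per_request * requests_per_day * days, 2)
```

For example, with hypothetical prices of 0.001 per 1,000 input tokens and 0.002 per 1,000 output tokens, 1,000 requests a day at 500 input and 200 output tokens each comes to about 27 currency units a month. Doubling the prompt length raises the input share of that figure proportionally, which is why prompt and context size are infrastructure concerns too.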

Tools That Power the Generative AI Ecosystem

When people ask for a list of generative AI tools, they often expect a single, simple set. In reality, tools are distributed across multiple functions.

1. Development Tools

These are used to build and interact with models:

- OpenAI API

- Hugging Face Transformers

- Cohere

- Replicate

They allow developers to quickly integrate AI capabilities without building models from scratch.

2. Orchestration Tools

These tools structure workflows:

- LangChain

- LlamaIndex

They help connect models with data sources, APIs, and external systems.

3. Vector Databases

Vector databases are one of the most critical components in modern AI systems.

Common options include:

- Pinecone

- Weaviate

- FAISS (a similarity-search library rather than a full database)

These databases store embeddings and enable semantic search. This is what allows AI systems to retrieve relevant context instead of generating generic responses.

4. Monitoring and Evaluation Tools

AI systems are not static. They require continuous monitoring.

Tools include:

- Weights & Biases

- Arize AI

These help track performance, detect issues, and improve outputs over time.
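Dedicated platforms do far more than this, but the basic signals they collect can be illustrated with a small wrapper. The `LatencyMonitor` class below is a hypothetical sketch, not the API of Weights & Biases or Arize AI; it records latency and error counts for any callable.

```python
import statistics
import time

class LatencyMonitor:
    """Records latency and errors for calls routed through track()."""

    def __init__(self):
        self.latencies_ms = []
        self.errors = 0

    def track(self, fn, *args, **kwargs):
        start = time.perf_counter()
        try:
            return fn(*args, **kwargs)
        except Exception:
            self.errors += 1
            raise  # let the caller decide how to handle the failure
        finally:
            # Runs for both successful and failed calls.
            self.latencies_ms.append((time.perf_counter() - start) * 1000)

    def summary(self) -> dict:
        calls = len(self.latencies_ms)
        return {
            "calls": calls,
            "errors": self.errors,
            "error_rate": self.errors / calls if calls else 0.0,
            "median_ms": statistics.median(self.latencies_ms) if calls else 0.0,
        }
```

Wrapping each model call as `monitor.track(model_call, prompt)` gives a running picture of latency and failure rate. Output quality needs separate evaluation, which is where the dedicated tools come in.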

5. Experimentation Environments

For testing and prototyping:

- Jupyter Notebook

- Google Colab

These environments allow teams to quickly iterate and test ideas before production deployment.

OpenAI vs Hugging Face vs LLaMA: A Practical Perspective

This comparison is less about “which is better” and more about “which fits your use case.”

OpenAI

- Best for fast deployment

- Minimal setup

- Reliable outputs

- Limited customization

Hugging Face

- Ideal for experimentation

- Wide range of models

- Requires technical expertise

LLaMA

- Open-weight models

- Full control over deployment

- Cost-effective at scale

For startups, API-first solutions like OpenAI often make sense. For enterprises, hybrid or self-hosted approaches using LLaMA are becoming more common.

How a Generative AI System Is Built (Real Workflow)

Understanding how systems are built gives clarity on how the stack fits together.

It usually starts with a business problem:

- Automating customer support

- Generating content

- Building AI assistants

Once the use case is clear, teams select a model based on performance and cost. Then comes the data layer, which feeds the system with relevant knowledge.

Frameworks are used to structure interactions, while vector databases enable context-aware responses. The system is then deployed on cloud infrastructure and continuously monitored.
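The workflow described above (retrieve relevant knowledge, build a prompt, call the model) can be sketched end to end. To keep the example self-contained, simple keyword overlap stands in for vector search and `stub_model` stands in for a real model; all function names are hypothetical.

```python
def retrieve(question: str, documents: dict[str, str], k: int = 1) -> list[str]:
    """Rank documents by words shared with the question
    (a stand-in for embedding-based search)."""
    q_words = set(question.lower().split())
    ranked = sorted(
        documents.values(),
        key=lambda text: len(q_words & set(text.lower().split())),
        reverse=True,
    )
    return ranked[:k]

def build_prompt(question: str, context: list[str]) -> str:
    """Place the retrieved passages ahead of the question."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Use only this context:\n{joined}\n\nQuestion: {question}\nAnswer:"

def stub_model(prompt: str) -> str:
    """Placeholder for a real model call; reports the prompt size only."""
    return f"(model response to a {len(prompt)}-character prompt)"

def answer_question(question: str, documents: dict[str, str]) -> str:
    context = retrieve(question, documents)
    return stub_model(build_prompt(question, context))
```

In a production system, each of these three functions would be replaced by a real component, and the call would run behind monitored cloud infrastructure.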

This is how modern generative AI applications move from idea to production.

What Makes a Strong AI Development Stack?

There is no universal stack, but strong systems share common characteristics.

A typical high-performing AI development stack includes:

- A reliable model (GPT, LLaMA)

- A framework for orchestration (LangChain)

- A vector database (Pinecone)

- A backend system (Python APIs)

- A frontend interface (React)

- Scalable infrastructure (cloud platforms)

What matters is not the tools themselves, but how well they integrate.

Enterprise-Level Generative AI Stack

For enterprises, the stakes are higher.

A typical enterprise LLM tech stack includes:

- Fine-tuned or custom models

- Private data pipelines

- Secure APIs

- Compliance and governance layers

- Advanced monitoring systems

Enterprises prioritize:

- Data security

- Regulatory compliance

- Scalability

- Reliability

Because even minor failures can have large-scale impact.
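One small piece of a data-security layer is redacting obvious personal data before text leaves your environment. The sketch below is illustrative only: the two regular expressions are simplistic assumptions that will miss many formats, and real deployments typically rely on dedicated PII-detection tooling plus policy review.

```python
import re

# Deliberately simple patterns for illustration; they are not exhaustive.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE = re.compile(r"\+?\d[\d\s().-]{7,}\d")

def redact(text: str) -> str:
    """Replace email addresses and phone-like numbers with placeholders."""
    text = EMAIL.sub("[EMAIL]", text)
    return PHONE.sub("[PHONE]", text)
```

Running `redact` on every prompt before it reaches an external API is one way to reduce the chance that customer contact details end up in a third party's logs.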

Models vs Frameworks: Clearing the Confusion

This is one of the most misunderstood areas in AI.

A simple way to understand it:

- Models generate outputs

- Frameworks help build systems around those models

For example:

- GPT generates text

- LangChain helps structure how that text is generated

Both are essential, but they serve completely different roles.

Choosing the Right Stack: What Businesses Actually Consider

When businesses evaluate their generative AI tech stack, they’re not just looking at features.

They consider:

- Cost of deployment and scaling

- Speed of development

- Data sensitivity

- Long-term flexibility

For example:

- A startup might prioritize speed and choose API-based solutions

- A large enterprise might prioritize control and choose self-hosted models

The “right” stack is always context-driven.
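A weighted scoring matrix is one simple way to make this context-driven choice explicit. The sketch below is an assumption about how a team might do it; the criteria, weights, and ratings are invented for illustration and carry no empirical meaning.

```python
def score_options(weights: dict[str, float],
                  options: dict[str, dict[str, float]]) -> list[tuple[str, float]]:
    """Return options sorted by weighted average rating, best first."""
    total = sum(weights.values())
    results = []
    for name, ratings in options.items():
        score = sum(weights[c] * ratings[c] for c in weights) / total
        results.append((name, round(score, 2)))
    return sorted(results, key=lambda r: r[1], reverse=True)

# Hypothetical 1-5 ratings for two deployment approaches.
options = {
    "api_based": {"speed": 5, "control": 2},
    "self_hosted": {"speed": 2, "control": 5},
}

startup_weights = {"speed": 3, "control": 1}     # speed matters most
enterprise_weights = {"speed": 1, "control": 3}  # control matters most
```

With these made-up numbers, `score_options(startup_weights, options)` ranks `api_based` first, while `score_options(enterprise_weights, options)` ranks `self_hosted` first. The same options produce different answers once the priorities change.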

Where Generative AI Tech Stacks Are Headed

The evolution of AI stacks is happening fast.

Some clear trends in 2026 include:

- Smaller, optimized models replacing large ones

- Increasing adoption of open-source ecosystems

- Rise of multi-modal AI systems

- Growing use of AI agents for autonomous workflows

The focus is shifting from isolated tools to integrated ecosystems.

Conclusion

Generative AI is not just about models anymore. It’s about how everything connects, from data pipelines to infrastructure and from frameworks to applications.

A well-designed generative AI tech stack doesn’t just improve performance. It defines how scalable, efficient, and reliable your AI solution will be.

If you’re building AI systems today, the real question isn’t:

“Which model should we use?”

It’s:

“How do we design a stack that actually works in the real world?”

Because that’s where long-term value is created.

Frequently Asked Questions

What does a generative AI tech stack include?

It includes data pipelines, AI models, frameworks, vector databases, infrastructure, and application layers working together to build scalable AI systems.

Which frameworks are commonly used to build generative AI applications?

LangChain, LlamaIndex, Haystack, and Semantic Kernel are widely used for building structured and scalable AI applications.

Which tools are commonly used in generative AI development?

Common tools include OpenAI APIs, Hugging Face, LangChain, vector databases like Pinecone, and monitoring platforms like Weights & Biases.

How do businesses choose a generative AI tech stack?

They evaluate use case complexity, cost, scalability, data privacy, and internal expertise before selecting the most suitable stack.

What is the difference between models and frameworks?

Models generate outputs, while frameworks help developers build workflows, manage logic, and integrate models into applications.
